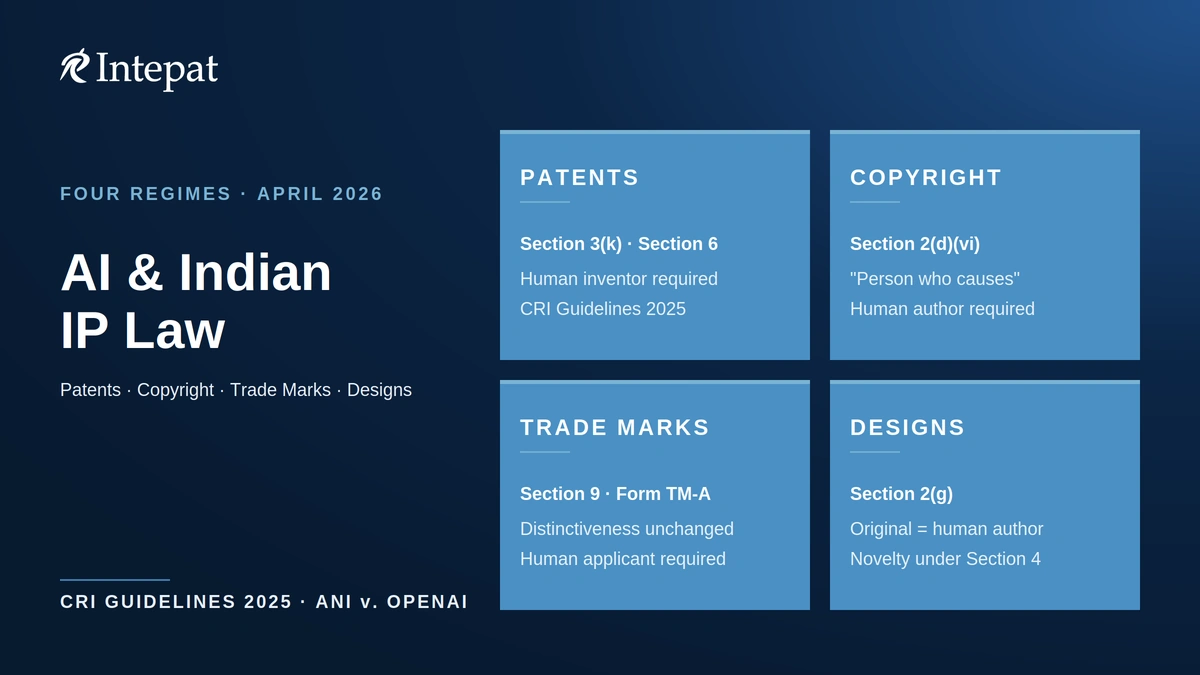

Indian law protects AI-related assets through four separate IP regimes: patents, copyright, trade marks and designs. Each has its own rules on what AI work qualifies and who owns it. The CRI Guidelines 2025 confirm AI cannot be named as the inventor, and AI-generated content needs a human “person who causes the work to be created” for copyright.

This article sets out the Indian intellectual property law for artificial intelligence in 2026, with comparative notes on the United States, United Kingdom and European Union only where the Indian framework leaves a material gap.

| Question | Quick answer |
| --- | --- |
| Patent for an AI invention? | File under Section 6 of the Patents Act with a human inventor named. Clear Section 3(k) by showing technical effect, following the framework set out in the CRI Guidelines 2025. |
| Copyright in AI-generated content? | Identify the human “person who causes the work to be created” under Section 2(d)(vi) of the Copyright Act. Registration is optional but useful for enforcement. |
| Trade mark for an AI-related brand? | File Form TM-A in a human or corporate applicant’s name. Distinctiveness is assessed under Section 9. AI tools speed up clearance and watch services but the application itself is still made by a person. |
| Design for an AI-generated visual? | Register under the Designs Act 2000 with a human author named as designer. |
| Training data, weights, prompts? | India has no statutory text-and-data-mining exception and no trade-secret statute. Protect via NDAs, employment IP assignment, and access controls. |

Intellectual property law for artificial intelligence in India: the four-regime view

Indian IP law was not written for AI. The Patents Act 1970, Copyright Act 1957, Trade Marks Act 1999 and Designs Act 2000 all assume a human or corporate creator, owner and applicant. AI changes none of the underlying statutory text in 2026, but the courts and the Patent Office have clarified how the existing rules apply to AI inventions, AI-generated content and AI-driven enforcement.

For an AI business in India, the practical question is which regime protects which asset. A machine-learning model that solves a technical problem may be patentable. The same model’s outputs can be copyrightable, depending on how the human direction is structured. The brand around the product is protectable as a trade mark. The visual interface may qualify as a registered design. Training data, model weights and prompts sit outside all four regimes and depend on contract.

The CRI Guidelines 2025, notified by the Controller General of Patents, Designs and Trade Marks on 29 July 2025, are the most significant recent development. They consolidate jurisprudence built over the last decade on Section 3(k) and, for the first time, expressly address AI inventorship and the disclosure standards for AI/ML inventions. They replace the 2017 Guidelines, which case law had already overtaken, and applicants still relying on the older framework face examination outcomes that do not match it.

The sections below run patents first, because that is where the CRI Guidelines 2025 do most of the work. Copyright next, because authorship of AI-generated content is the most contested live issue. Trade marks third, because AI is changing how marks are searched and enforced even though it changes nothing about who can register one. Designs last, briefly, because the rule is short. A separate section covers training data, weights and prompts.

Patents: Section 3(k), the CRI Guidelines 2025 and AI inventorship

Two separate questions sit inside any AI patent application in India. The first is whether the invention is excluded subject matter under Section 3(k), which excludes from patentability “a mathematical or business method or a computer programme per se or algorithms”. The second is whether the inventor named on the application can be the AI system itself. The answers come from different sources, and an applicant who treats the two questions as the same risks losing on the easier one.

On the subject-matter question, the operative test is whether the invention shows technical effect or technical contribution. The Delhi High Court set this out in Ferid Allani v. Union of India (12 December 2019), and the test has been reaffirmed in a series of judgments from the Delhi and Madras High Courts since. The CRI Guidelines 2025 codify this case law and provide a step-wise assessment methodology with worked examples. For a deeper walkthrough of how the Indian Patent Office applies this framework to machine-learning inventions, see how AI/ML inventions are examined under Section 3(k).

Three points from the case law matter for practical drafting. OpenTV Inc v. Controller of Patents (Delhi HC, 11 May 2023) confirmed that the business-method limb of Section 3(k) is an absolute bar in India, with no “as such” qualifier of the kind that lets some software-based business methods through in the United Kingdom or before the European Patent Office. Raytheon v. Controller (Delhi HC, 15 September 2023) confirmed that novel hardware is not required to overcome the computer-program-per-se exclusion, despite earlier examination practice that often demanded it. Microsoft Technology Licensing v. Asst. Controller (Delhi HC, 16 April 2024) confirmed that the technical effect must be specific and credible, not a generic gain that any general-purpose computer would produce. The CRI Guidelines 2025 draw these strands together in a single assessment framework.

On the inventorship question, the CRI Guidelines 2025 are explicit. They distinguish AI-generated inventions, where the AI system creates the invention “autonomously, or with very limited human intervention”, from AI-assisted inventions, where AI is used as a tool by a human inventor. AI-generated inventions are not patentable, because under Section 6 only a “person” claiming to be the true and first inventor can apply, and the guidelines record that AI cannot be a person. AI-assisted inventions are not categorically excluded under Section 3(k) and remain patentable on ordinary criteria.

The Indian position is consistent with the position in every major jurisdiction that has decided the question. The UK Supreme Court reached the same conclusion in Thaler v. Comptroller-General in December 2023 by reading “inventor” in the Patents Act 1977 to require a natural person. The USPTO took the same view earlier, and the EPO Boards of Appeal did the same. For the comparative case-law line, see Intepat’s commentary on the DABUS line of inventor cases.

The disclosure standard for AI patents in India is now the dominant prosecution risk. The statutory standard is Section 10(4)(a), which requires the specification to sufficiently describe the invention and the method by which it is to be performed. Caleb Suresh Motupalli v. Controller of Patents (Madras HC, 29 January 2025) reinforces strict enablement scrutiny in the AI context: the court held that a specification which assembles published literature and gestures at an AI use case, without enabling reproduction by a person skilled in the art, fails Section 10(4)(a). The CRI Guidelines 2025 supply the examination-facing detail. Their worked examples expect specifications for AI/ML inventions to disclose the model architecture with structural detail, the training dataset’s characteristics, the pre-processing pipeline, training parameters and validation results that demonstrate the claimed technical effect. A specification that says what the model does without showing how it does it is unlikely to clear examination on the sufficiency ground.

| What this means in practice |
| --- |
| An AI invention claim in India must (1) name a human inventor, (2) clear Section 3(k) by showing a specific and credible technical effect beyond mere automation, and (3) disclose enough technical detail for a skilled person to reproduce the invention. Drafting that meets all three is what differentiates a granted CRI patent from a refused one. |
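The three requirements above, together with the disclosure items listed in the preceding paragraph, can be sketched as a pre-filing checklist. This is a minimal illustration and not an examination tool: the `DraftApplication` structure, its field names and the `checklist_gaps` helper are all hypothetical, and a non-empty field says nothing about whether an examiner would find the technical effect specific and credible.

```python
from dataclasses import dataclass, field

# Disclosure items the article attributes to the CRI Guidelines 2025 worked examples.
# The identifier names themselves are assumptions made for this sketch.
DISCLOSURE_ITEMS = (
    "model_architecture",
    "training_dataset_characteristics",
    "preprocessing_pipeline",
    "training_parameters",
    "validation_results",
)


@dataclass
class DraftApplication:
    """Hypothetical summary of a draft specification, for illustration only."""
    human_inventors: list[str]
    technical_effect: str  # short statement of the specific effect claimed
    disclosed: set[str] = field(default_factory=set)


def checklist_gaps(app: DraftApplication) -> list[str]:
    """Return human-readable gaps; an empty list means nothing was flagged."""
    gaps = []
    if not app.human_inventors:
        gaps.append("no human inventor named (Section 6)")
    if not app.technical_effect.strip():
        gaps.append("no specific technical effect stated (Section 3(k))")
    for item in DISCLOSURE_ITEMS:
        if item not in app.disclosed:
            gaps.append(f"disclosure missing: {item} (Section 10(4)(a))")
    return gaps
```

A draft that names no inventor, states no effect and discloses only the architecture would return six gaps; a complete draft returns an empty list. The check is purely presence-based, so it can flag omissions but cannot confirm sufficiency.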

Copyright: who owns AI-generated content under Section 2(d)(vi)

Indian copyright law has had a computer-generated-works rule since the 1994 amendment, and that rule still does the work in 2026. Section 2(d)(vi) of the Copyright Act provides that for any literary, dramatic, musical or artistic work that is computer-generated, the author is “the person who causes the work to be created”. India is one of the few major jurisdictions with an express statutory rule for computer-generated works. The United Kingdom has a comparable provision in Section 9(3) of the Copyright, Designs and Patents Act 1988, though its application to modern generative AI remains contested and the March 2026 UK Government Report proposed removing that protection. The United States has gone the other way through Copyright Office guidance and the Thaler litigation, refusing registration to fully autonomous AI outputs.

What Section 2(d)(vi) does not do is name a specific person. The phrase leaves room for the prompt author, the model operator, the model developer, the platform that hosts the model, or the user who curates the output. Indian courts have not yet ruled on the point in a contested case. In practice, the pre-existing tests from human-authored works carry across: originality requires “skill, labour and judgment” of more than a trivial kind, and the person who supplies that input is the one with the strongest claim. A user who prompts a generative model and accepts the first output without curation has weaker title than one who iterates, edits and arranges.

The only Indian administrative test of Section 2(d)(vi) in an AI context is the Suryast registration (ROC No. A-135120/2020). In November 2020 the Copyright Office registered Ankit Sahni and his AI tool “RAGHAV” as co-authors, after rejecting an earlier application naming the AI as sole author. A November 2021 withdrawal notice cited Sections 2(d)(iii) and 2(d)(vi); Sahni argued the Act contains no provision for post-grant withdrawal, and the registration continues on the records. Suryast is a regulatory signal of the uncertainty in Section 2(d)(vi), not a binding precedent.

A second issue is that Section 2(o) defines “literary work” to include computer programmes and computer databases. The model itself, as code, is a literary work, and authorship in the model is distinct from authorship in its outputs. The base model code will ordinarily belong to its human author or to the relevant employer or assignee under the Section 17 provisos; ownership of the outputs generated using the model is a different question, governed by Section 2(d)(vi).

For AI-generated artistic outputs specifically, including images and visual designs, see Intepat’s analysis of authorship in AI-generated art. The practical take-away for an AI business in India is that copyright registration is not mandatory but provides a useful evidentiary record under the Copyright Rules 2013, and that a well-drafted development or service agreement allocating authorship across developer, operator and user resolves most downstream disputes before they arise.

A separate misconception worth flagging: Indian copyright law does not say AI-generated content is in the public domain. It says a human must be the author. If the chain of human contribution can be traced, the work is protectable. If it cannot, the work is not unprotected because AI made it; it is unprotected because no author is identifiable. These are two different failure modes and they have different fixes.

Training data and Section 52: the gap the ANI v. OpenAI case will test

Indian copyright law in 2026 does not have a text-and-data-mining exception. Section 52 of the Copyright Act lists fair-dealing exceptions that include private or personal use including research, criticism or review, and reporting of current events. None of these were drafted with large-language-model training in mind. Indian copyright case law has historically interpreted Section 52 strictly as a closed list, leaving little room for courts to read in a new exception for AI training without a statutory amendment. Whether the existing research and reporting limbs reach LLM training is the live question now before the courts.

This is the gap that the ANI Media v. OpenAI litigation is testing. ANI Media filed suit in the Delhi High Court in November 2024 (CS(COMM) 1028/2024), alleging that OpenAI used ANI’s copyrighted news content to train ChatGPT without authorisation. The court framed four issues by order dated 19 November 2024: whether storage of copyrighted data for training is infringement; whether generating user responses from such data is infringement; whether either falls within Section 52; and whether the Delhi court has jurisdiction over a defendant headquartered in the United States. As of April 2026, Justice Amit Bansal has reserved orders on the interim-relief application after 32 hearings concluded on 27 March 2026; the judgment is expected to provide India’s first substantive judicial guidance on AI training and Indian copyright law. For a fuller account of the litigation and the AI music-industry parallel, see Intepat’s training-data infringement analysis.

Other jurisdictions have closed the same gap through different mechanisms. The European Union’s Digital Single Market Directive (2019/790) provides a TDM exception in Articles 3 and 4, with Article 3 applying to research organisations and Article 4 applying more broadly subject to a rightsholder opt-out. Japan’s Copyright Act Article 30-4 permits use of copyrighted works for non-enjoyment purposes including machine learning. Singapore’s Copyright Act 2021 contains a computational-data-analysis exception. The United States operates through the case-by-case fair-use doctrine in Section 107 of the US Copyright Act; the Thomson Reuters v. ROSS Intelligence judgment of 11 February 2025 (D. Del., Judge Bibas) was the first US court to reject a fair-use defence in an AI training case, on facts specific to a non-generative legal-research search engine.

For an Indian AI business, three practical points follow. First, the legal status of training on Indian copyrighted content remains unsettled and the ANI v. OpenAI judgment will substantially change the answer. Second, training on EU or Japanese sources does not import those statutory exceptions into Indian law; the territorial reach of an exception is limited to the jurisdiction whose statute creates it. Third, opt-out signals issued by Indian rights-holders (robots.txt, meta tags, contractual terms) are likely to become evidentially significant whichever way the Delhi High Court rules, because a documented opt-out narrows the scope of any implied licence argument.
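On the third point, a crawler operator can at least record whether a site's robots.txt disallowed its user agent at the time of collection. The sketch below uses Python's standard-library `urllib.robotparser`; the `ExampleTrainingBot` user agent, the sample robots.txt and the `crawler_allowed` helper are hypothetical, and honouring robots.txt is a technical courtesy check, not a determination of what Indian copyright law permits.

```python
from urllib.robotparser import RobotFileParser


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check a robots.txt body (already fetched) against one user agent and URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt in which a publisher opts one training crawler out
# while leaving the site open to other agents.
EXAMPLE_ROBOTS = """\
User-agent: ExampleTrainingBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    page = "https://example.com/news/article-1"
    print(crawler_allowed(EXAMPLE_ROBOTS, "ExampleTrainingBot", page))
    print(crawler_allowed(EXAMPLE_ROBOTS, "SomeOtherBot", page))
```

Storing the robots.txt body and the result alongside each collected document gives a dated record of the opt-out position, which is the kind of evidence the paragraph above anticipates. Meta tags and contractual terms would need separate checks.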

The Parliamentary Standing Committee on Commerce first flagged the need for statutory amendment in its 161st Report (July 2021), recommending a separate IP category for AI inventions. The Department for Promotion of Industry and Internal Trade (DPIIT) constituted an eight-member expert committee in April 2025 to examine whether the Copyright Act 1957 adequately addresses generative AI. In December 2025 the committee published Part 1 of its Working Paper proposing a hybrid compulsory-licensing model, with public consultation closing on 7 January 2026. No statutory amendment has been notified as at April 2026. Until either Parliament acts on the Working Paper or the Delhi High Court rules, the safest assumption for an Indian AI developer is that scraping and training on Indian copyrighted content carries litigation risk that has not been priced into Indian fair-dealing case law.

Trade marks: distinctiveness for AI-generated marks and AI in enforcement

The Trade Marks Act 1999 has nothing specific to say about AI, and that is the right answer for most purposes. A trade mark application under Form TM-A is filed by a human or corporate applicant. The Registry assesses absolute grounds under Section 9 (distinctive character, descriptive marks, customary marks) and relative grounds under Section 11 (likelihood of confusion with earlier marks, including likelihood of association). Whether the mark itself was conceived by a human designer, a generative AI tool, or both, makes no statutory difference to registrability.

Two practical questions do change with AI in the loop. The first is distinctiveness. A mark that a human designer might never produce, because it does not read as a brand to a human eye, can be the cheapest output of an image-generation model. Distinctiveness under Section 9(1)(a) is judged from the perspective of the average consumer, not the average algorithm. A generative-AI logo that scores well on novelty metrics can still fail Section 9 if a consumer would not perceive it as a source identifier. The Section 9 test is unchanged; what has changed is the volume of marks now filed without any human design judgment between conception and submission.

The second is enforcement. AI-driven trademark search and watch services have become standard tooling for prosecution firms managing significant filing volumes, even as manual portal search remains the most common practice for smaller filers. Image similarity, phonetic similarity and conceptual similarity searches that took specialist firms days now complete in minutes. The cost-benefit equation for clearance searches before filing has shifted, and so has the expected speed of opposition once a third-party application is published. For how AI is reshaping search and watch workflows in particular, see Intepat’s analysis of AI-driven trademark workflows.

The unresolved question is liability. If an AI agent autonomously orders goods on an online marketplace and infringes a third-party mark in doing so, who is liable: the platform, the developer, the operator, or none of them? Indian doctrine on secondary liability and intermediary safe harbour was developed for a human commerce model. The early commentary on this point suggests a layered approach where contractual indemnities between platform and operator do most of the work, with statutory liability following the human party with the closest control. For the deeper analysis, see Intepat’s commentary on AI in trademark enforcement.

The doctrinal frame the courts will likely apply is the “average consumer with imperfect recollection” standard for confusion under Section 11. AI buyers do not have imperfect recollection. They have either perfect recall, no recall at all, or whatever is dictated by their training data. Whether and how this changes the test is open, and Indian courts have not yet had to decide it. For now, the safest assumption is that the standard remains the human consumer’s perspective, and that AI-mediated transactions are evaluated by reference to the human end-user the AI is acting for.

Designs: AI-generated visual outputs and the original-author requirement

Industrial design protection in India sits under the Designs Act 2000, and its key requirement for any AI-generated visual is that the design be “original”. Section 2(g) defines original as “originating from the author of such design”. On the better reading, originality still assumes a human authorial source. A design generated autonomously by a model with no human direction would face serious vulnerability at registration and cancellation stage, because the author concept does the same work it does in copyright and a non-human would not satisfy it. Indian courts have not yet ruled directly on AI-generated designs, so the safest working assumption is the human-author reading.

In practice, AI-generated industrial designs face the same authorship question as AI-generated artistic works under copyright. If a human designer prompts, curates, iterates and selects the output, the human is the author. If the model produces a design without human direction, no author exists for registration purposes. The Designs Manual treats the question of authorship at registration as a factual matter for the applicant to support, and a registration that names a person who did not contribute to the design is vulnerable to cancellation under Section 19.

The novelty bar under Section 4 of the Designs Act is also relevant for AI-generated outputs. A model trained on an industrial-design database may produce outputs that are statistical recombinations of prior published designs. Such an output is unlikely to clear the novelty test, even where the human who runs the prompt has done genuine selection and curation work. For AI-generated visuals intended for design registration, the practical workflow is to train or curate against design databases that exclude prior-published industrial designs in the relevant class, and to retain documentation of human selection at each step.

The overlap with copyright is worth flagging for any AI-generated visual that has both industrial application and artistic value. Under Section 15(2) of the Copyright Act, copyright in a design that is capable of registration under the Designs Act but has not been registered ceases once an article bearing the design has been reproduced more than fifty times by an industrial process. Where the same AI-generated image is intended for both an artistic catalogue and a product launch, design registration is the protection that survives industrial application.

Confidential information: contracts where statute is silent

India has no trade-secret statute. The protection of training data, model weights, prompts and proprietary fine-tuning approaches rests on the law of confidence, employment IP assignment, NDAs and access controls. Courts in India have recognised confidentiality protection through common-law principles and through equitable doctrine in cases involving customer lists, technical know-how and proprietary processes. The same principles apply to AI training corpora and model parameters.

The practical protection stack for an AI business in India is: (1) NDAs at every external boundary including with annotation vendors, evaluation contractors and red-teaming partners; (2) employment IP-assignment clauses that capture model improvements, prompts and fine-tuning datasets as work-for-hire output where the contract permits this; (3) access controls and audit logging that establish trade-secret-like reasonable measures of secrecy; and (4) where a model or weight set is licensed out, contractual restrictions on reverse engineering, fine-tuning and redistribution. None of these substitutes for statutory protection where it exists; all of them are necessary where it does not. For the broader interface between AI, IP and personal-data protection, see the data-privacy interface analysis.
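Item (3) in the stack above, audit logging, can be illustrated with a tamper-evident log in which each entry carries a hash of the previous one. This is a minimal sketch under stated assumptions: the entry fields, the `append_entry` and `verify_chain` helpers and the in-memory list are all hypothetical, and a production system would also need secure storage, authentication and retention controls.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], user: str, asset: str, action: str) -> dict:
    """Append an access record that is chained to the previous record's hash."""
    body = {
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "asset": asset,
        "action": action,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Return True only if every entry's hash and back-link are intact."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any earlier record changes its hash and breaks the chain, so `verify_chain` returns `False`. A log of this kind is one way to show that access to weights or training data was controlled and monitored, which supports the "reasonable measures" limb of a confidence claim.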

Where India sits against the US, UK and EU on AI and IP

The four-jurisdiction comparison matters for an Indian AI business operating cross-border. The table below summarises the position on the four issues most likely to come up in cross-border AI/IP advisory work, as at April 2026.

| Issue | India | United States | United Kingdom | European Union |
| --- | --- | --- | --- | --- |
| AI as named inventor in patent | Not permitted; CRI Guidelines 2025 confirm Section 6 requires a person | Not permitted; USPTO 2024 inventorship guidance | Not permitted; UK SC in Thaler (Dec 2023) | Not permitted; EPO Boards of Appeal in DABUS (Dec 2021) |
| Authorship of AI-generated content | Section 2(d)(vi): person who causes the work to be created | Copyright Office: human-authorship required; Thaler DC litigation | CDPA s.9(3): person by whom arrangements are made; March 2026 UK Government Report proposed removing this protection | No EU-level statutory rule; member-state copyright law applies; EU AI Act 2024 imposes transparency on training data |
| Statutory TDM exception for AI training | None | None; case-by-case fair use; Thomson Reuters v. ROSS (Feb 2025) denied fair use on facts | s.29A CDPA: non-commercial research only; March 2026 Government Report did not adopt a broad TDM exception | DSM Directive 2019/790 Articles 3 (research) and 4 (general, opt-out) |
| Trade-secret statute | None; common-law confidence + contract | Defend Trade Secrets Act 2016 (federal); state UTSA statutes | Common-law confidence + Trade Secrets (Enforcement, etc) Regulations 2018 | Trade Secrets Directive 2016/943 implemented across member states |

The comparative pattern is consistent on inventorship: no jurisdiction of interest permits AI as inventor. The pattern diverges on training-data exceptions, where India and the US lack a statutory carve-out while the EU has codified one. The pattern diverges further on trade-secret protection, where India is the sole jurisdiction in this group without a statute. For an AI business operating in India alone, only the inventorship and authorship rules are operationally settled; the rest depends on contract.

What to file and where: a decision guide for AI businesses

The decision tree for protecting an AI asset in India runs by asset type, not by IP regime. The framework below works for most cases, with the caveat that high-value assets often warrant overlapping protection across regimes.

For a model architecture or training method that solves a specific technical problem with a measurable improvement over prior art, file a patent application under the Patents Act. Name a human inventor. Draft the specification to meet the CRI Guidelines 2025 disclosure standard set out above, and frame the claims to show technical effect in implementation, not in abstract algorithmic description.

For training data sets, model weights, prompts, fine-tuning datasets and other proprietary information that derives value from secrecy, do not file anything. Protect through NDAs, employment IP assignment, and access controls. Where a competitor has obtained your data through a former employee or vendor, the action is for breach of confidence and contract.

For documentation, code, marketing copy, AI-generated outputs you have curated, datasets you have organised with skill and judgment, and similar literary or artistic works, copyright protection arises automatically on creation. Registration under Form XIV is optional but evidentially useful. For computer-generated works, document the human contribution chain.

For the brand around the AI product (the name, the logo, the tagline), file a trade mark application under Form TM-A. Conduct an AI-augmented clearance search before filing. The application is in your company’s name; whether the brand assets were designed by a human or generated by an AI tool does not affect registrability. Distinctiveness under Section 9 is the gate.

For the visual interface or the product’s external aesthetic features, file a design registration under the Designs Act. Name a human author. Document the human contribution to the design, particularly where AI tools were used in the creative process.

For everything else (trade dress, proprietary processes, customer-facing AI-driven services), the protection is contractual and operational. Indian law does not have a residual catch-all statute for AI assets that fall between the four IP regimes.

Filing decision summary

| Asset | Primary protection | Form / step |
| --- | --- | --- |
| AI-assisted invention with technical effect | Patent | Form 1 + Form 5; clear Section 3(k) per CRI Guidelines 2025 |
| Training data, weights, prompts | Confidentiality | NDAs, employment IP assignment, access controls |
| Documentation, content, curated outputs | Copyright | Form XIV (optional registration) |
| AI product brand | Trade mark | Form TM-A |
| AI product visual interface | Design | Designs Act 2000 application; human author named |
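For teams that track assets in an internal register, the filing summary can be encoded as a simple lookup keyed by asset type. The sketch below is illustrative only: the asset-type keys and the `primary_protection` helper are hypothetical names, the values restate the summary above, and the fallback reflects the article's point that anything outside the four regimes depends on contract and operational controls.

```python
# Asset type -> (primary protection, form or step), restating the filing summary.
# The dictionary keys are hypothetical labels chosen for this sketch.
FILING_GUIDE = {
    "ai_assisted_invention": (
        "Patent",
        "Form 1 + Form 5; clear Section 3(k) per CRI Guidelines 2025",
    ),
    "training_data_weights_prompts": (
        "Confidentiality",
        "NDAs, employment IP assignment, access controls",
    ),
    "documentation_content_curated_outputs": (
        "Copyright",
        "Form XIV (optional registration)",
    ),
    "product_brand": ("Trade mark", "Form TM-A"),
    "visual_interface": (
        "Design",
        "Designs Act 2000 application; human author named",
    ),
}

# Fallback for assets that fall between the four regimes.
FALLBACK = ("Contract and operational controls", "No filing; no residual statute")


def primary_protection(asset_type: str) -> tuple[str, str]:
    """Look up the primary protection for an asset type, defaulting to contract."""
    return FILING_GUIDE.get(asset_type, FALLBACK)
```

A lookup like this only captures the primary route. High-value assets may warrant overlapping protection across regimes, which a single-valued mapping does not represent.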

Frequently asked questions

No. The CRI Guidelines 2025 confirm that under Section 6 of the Patents Act 1970, only a person can apply as the true and first inventor. AI-generated inventions, where the AI system creates the invention autonomously or with very limited human intervention, are not patentable. AI-assisted inventions, where AI is used as a tool, remain patentable on ordinary criteria.

Under Section 2(d)(vi) of the Copyright Act 1957, the author is “the person who causes the work to be created”. The statutory position attributes authorship to a human, but the section does not name which human. In disputed cases, the person who supplied the skill, labour and judgment that shaped the work has the strongest title. Contractual allocation between developer, operator and user is what most disputes turn on.

Unsettled. Section 52 of the Copyright Act lists fair-dealing exceptions that include research and reporting of current events, and Indian case law has historically treated that list as closed. In ANI Media v. OpenAI (CS(COMM) 1028/2024), filed November 2024, the Delhi High Court reserved orders on the interim-relief application on 1 April 2026 after 32 hearings. The judgment, when delivered, will provide India’s first substantive guidance on AI training and Indian copyright law.

Yes, in principle. Trade mark registration under Form TM-A is filed in the applicant’s name and the Registry assesses distinctiveness under Section 9 from the perspective of the average consumer. Whether the mark was designed by a human or generated by AI is not a separate registrability test. The mark must still distinguish the applicant’s goods or services from those of others.

Yes, where a human author can be identified for the design. Section 2(g) defines “original” as “originating from the author of such design”, and the author concept requires a person. A design produced by a model without human direction has no statutory author. Documentation of human contribution to selection, prompt design, iteration and curation is what supports a valid registration.

No. India has no trade-secret statute as at April 2026. Protection of training data, model weights, prompts and proprietary fine-tuning rests on the common law of confidence, NDAs, employment IP-assignment clauses, and access controls.

The CRI Guidelines 2025, notified by the CGPDTM on 29 July 2025, replaced the 2017 Guidelines and codified the Section 3(k) jurisprudence developed by the Delhi and Madras High Courts since Ferid Allani (2019). The Guidelines distinguish AI-generated from AI-assisted inventions, raise disclosure expectations for AI/ML inventions, and provide a step-wise assessment methodology with worked examples.

Yes, where the application of the known architecture to a specific technical problem produces a specific and credible technical effect. The novelty and inventive-step assessments are conducted in the same way as for any other field of invention. The CRI Guidelines 2025 also expect disclosure of the dataset characteristics, training parameters and validation results that demonstrate the claimed technical effect.

The European Union has a statutory text-and-data-mining exception under Articles 3 and 4 of the Digital Single Market Directive 2019/790. India has no equivalent statutory exception. An Indian AI developer cannot import the EU exception by training on EU sources; the territorial reach of an exception is limited to the jurisdiction whose statute creates it. The Indian position turns on whether the existing fair-dealing limbs in Section 52 of the Copyright Act reach LLM training, which is the question pending in ANI Media v. OpenAI.

In most cases, yes, but for different assets. Patents protect the technical model where it shows a credible technical effect. Copyright protects code, documentation, marketing copy and curated outputs without filing. Trade marks protect the brand around the product. Designs protect the product’s visual interface. The decision to file is asset-by-asset, not regime-by-regime. Coordinated filing across regimes provides layered protection where the same asset has multiple protectable aspects.

Disclaimer

This article is for information only and does not constitute legal advice. The CRI Guidelines 2025 cited above were notified on 29 July 2025; readers should verify the current text on the CGPDTM website at https://ipindia.gov.in before relying on any specific provision. In the ANI Media v. OpenAI case (Delhi High Court, CS(COMM) 1028/2024), the court reserved orders on 1 April 2026; the eventual judgment will affect the Indian position on AI training data. For advice on a specific matter, consult a qualified Indian patent agent or IP counsel. Verified as of April 2026.